The Logical Data Warehouse as a transmission and processing layer for IoT

The Internet has revolutionized our lives. We are always connected and will be even more connected in the future. In the past, humans could live without electricity; today we can no longer survive without it. Digital natives (and not only they) depend on the network as if it were pure energy. It is enough to see what happens in our offices or homes when we have connection problems. This dependence is a child of progress, a progress that will bring us new paradigms and new technological challenges. In the book “Hit Refresh”, Microsoft’s CEO Satya Nadella identifies three technological trends for the near future: mixed reality, artificial intelligence and quantum computing. Perhaps we should add the Internet of Things, which is directly linked to these three emerging technologies.

The Internet of Things is a new paradigm in which computing and network capabilities are embedded in any type of object. We can use these capabilities to query the state of the object and, where possible, to change it. It is a new kind of world in which we can connect almost anything to the network and use these objects collaboratively to perform complex tasks that require a high degree of intelligence, and where the sum of all this real-time data opens up new scenarios.

The IoT represents intelligence and interconnection, as IoT devices are equipped with integrated sensors, actuators, processors and transceivers. IoT is not a single technology but rather an agglomeration of several technologies working together. The main actors are the sensors, which sit on the object and report data such as temperature, pulse, humidity, weight, speed and anything else a sensor can capture. Another key actor is the actuator, whose task is to act on the object’s state once it receives a remote command. An actuator opens doors, turns off lights, starts a washing machine or performs any other action the object allows. These two actors are not “intelligent” on their own; they need two more elements, which are the basis of this article: computation and communication. Computation is the programming of the actuators in relation to the sensors (if a particular condition is met, then act in this way).
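The “if a particular condition is met, then act in this way” idea can be sketched in a few lines of Python. This is only an illustration: the `Reading` and `Command` types, the `fan-1` actuator and the 30-degree threshold are hypothetical and do not come from any specific IoT platform.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Reading:
    sensor_id: str
    kind: str      # e.g. "temperature" or "humidity"
    value: float


@dataclass
class Command:
    actuator_id: str
    action: str


def decide(reading: Reading) -> Optional[Command]:
    """Return an actuator command if the reading meets a rule, else None."""
    # Hypothetical rule: switch on a fan when the temperature exceeds 30 degrees.
    if reading.kind == "temperature" and reading.value > 30.0:
        return Command(actuator_id="fan-1", action="turn_on")
    return None


# A hot reading yields a command; any other reading yields None.
command = decide(Reading("sensor-7", "temperature", 34.5))
```

In a real deployment the rule would run in the processing layer and the resulting command would travel back to the actuator over the network, which is why communication matters as much as computation.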

Communication is even more important, as it is the true neurotransmitter that carries the orders. An IoT system needs stability, scalability and security. Even powerful software needs something more to operate in this complex environment. First, we need middleware that can connect and manage all these heterogeneous components.

We need a lot of standardization to connect many different devices. We need a data orchestrator that can understand and manage different data and give precise orders. Logical Data Warehouse technology lends itself perfectly to this mission. Lyftrondata’s logical layer can be the perfect solution for IoT with large amounts of data because it offers access to different real-time data sources, calculation speed backed by Apache Spark as a query engine, and full T-SQL support.

IoT finds diverse applications in healthcare, fitness, education, entertainment, social life, energy conservation, environmental monitoring, home automation and transport systems. The spread of ever more pervasive and ever faster network infrastructure, together with ever-increasing computing capacity and therefore faster response times, will allow for explosive growth in the number of these devices.

There is no single consensus on the architecture of IoT, as different architectures have been proposed by different researchers.

Three- and five-layer architectures

The most basic architecture in the world of IoT is a three-layer architecture, which was introduced in the early stages of research in this area.

The perception layer: is the physical layer, which has sensors to detect and gather information about the environment. It senses physical parameters or detects other smart objects in the environment.

The network layer: is responsible for connecting to other smart things, network devices and servers. Its features are also used to transmit and process sensor data. It is the most critical part of the whole process, and it is where Lyftrondata acts as an orchestrator of the data coming from the different “receptors”.

The application layer: is responsible for delivering application-specific services to the user. It defines the various applications in which IoT can be deployed, e.g. smart homes, smart cities and smart health.

The five-layer IoT architecture adds the processing layer and the business layer. In this case, Lyftrondata and its Logical Data Warehouse technology would sit in the processing layer, although it essentially transmits and connects data rather than storing it (by its very nature, an LDW does not copy the data).

Transforming Events into Information

Events fall into two categories:

- Raw events

- Complex events

In the first case, the event is almost always raw information about a single activity, reported by a device and sent to an event stream. These raw events contain only limited information from a single device (device ID, action, and a measurement such as movement, temperature or heart rate).

In the second case, complex events are derived from multiple events (e.g. more than 10 events with a temperature above 50 degrees in 1 hour means overheating). Complex events can be based on a series of events from the same device, but can also depend on multiple events from different devices (for example, the same user is involved in two events: the same passenger entered one metro station and left at another). Complex events can also be related to information about users or devices stored in other databases, so the flow of information is a critical factor, requiring high availability and fast response times.
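The overheating rule mentioned above (more than 10 events above 50 degrees within one hour) can be sketched as a sliding-window detector. This is a minimal illustration, assuming that events for a single device arrive in timestamp order; the class and constant names are invented for the example.

```python
from collections import deque
from datetime import datetime, timedelta

WINDOW = timedelta(hours=1)
THRESHOLD_TEMP = 50.0   # degrees, from the example in the text
MAX_HOT_EVENTS = 10     # more than this many hot events means overheating


class OverheatDetector:
    """Turns raw temperature events from one device into a complex event."""

    def __init__(self) -> None:
        self.hot_times = deque()  # timestamps of recent hot readings

    def observe(self, timestamp: datetime, temperature: float) -> bool:
        """Feed one raw event; return True if overheating is detected."""
        if temperature > THRESHOLD_TEMP:
            self.hot_times.append(timestamp)
        # Drop hot readings that have fallen out of the one-hour window.
        while self.hot_times and timestamp - self.hot_times[0] > WINDOW:
            self.hot_times.popleft()
        return len(self.hot_times) > MAX_HOT_EVENTS
```

A detector that correlates events from different devices (such as the metro entry and exit of the same passenger) would follow the same pattern, but would key its state on the user rather than on the device.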

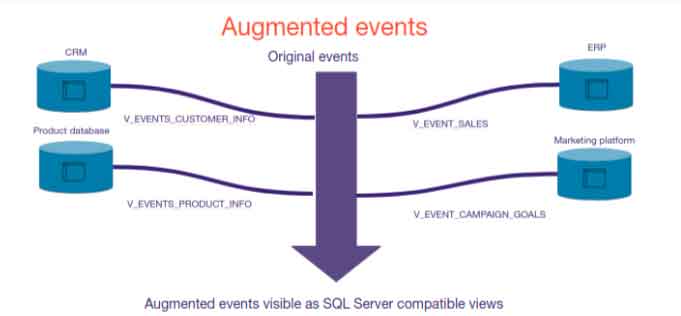

Event Augmentation

Adding information to an event by relating it to data from other databases is called event augmentation. Staying with the metro example: an event generated at a metro station when a person passes through the gate using a travel card will contain only the gate identifier (device), the timestamp and the travel card identifier. The data about the traveller, however, is held elsewhere: additional information on the travel card is stored in the passenger database. This is where we can use Lyftrondata and its LDW technology to link events from the event stream to our passenger database.
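Conceptually, event augmentation is a lookup (or join) on the travel card identifier. The sketch below illustrates the idea with an in-memory dictionary standing in for the passenger database; the field names and sample data are hypothetical, and with an LDW the same link would presumably be expressed as a SQL join across the two sources rather than in application code.

```python
from typing import Any, Dict

# Hypothetical passenger database, keyed by travel card identifier.
PASSENGERS: Dict[str, Dict[str, Any]] = {
    "card-001": {"name": "A. Rider", "ticket_type": "monthly"},
}


def augment(event: Dict[str, Any], passengers: Dict[str, Dict[str, Any]]) -> Dict[str, Any]:
    """Return a copy of the raw event enriched with passenger data.

    The "passenger" field is None when the card is unknown.
    """
    return {**event, "passenger": passengers.get(event["card_id"])}


# A raw event carries only the device, the timestamp and the card identifier.
raw_event = {
    "device_id": "station-12/door-3",
    "timestamp": "2024-01-01T08:05:00",
    "card_id": "card-001",
}
augmented = augment(raw_event, PASSENGERS)
```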

Contexts for the use of IoT

- Real-time reaction: when the system must react to particular events or a sequence of events in real time or near real time. Imagine a subway line where 10,000 people entered the same station in one hour, which means the station is overloaded. Here we are dealing with “complex events” that are built from a particular sequence of multiple smaller events.

- Event pattern detection (data science): analysis of all historical events to find “complex events” and predict behaviours or reactions. In this case data analysts need access to a history of events that can be re-analyzed to find interesting patterns or complex events, and they should be able to link raw events with additional information from other event streams or from databases.

This requires some agility in handling data streams, because data analysts must be free to analyze any data stream from the last day, week, month or year to detect complex events. Normally the raw data received from the devices is stored on streaming platforms such as Apache Kafka, which are not designed to be queried with complex SQL statements; Lyftrondata makes this possible.
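As an illustration of re-analyzing a history of events, the sketch below counts station entries per hour over an arbitrary time range and flags the overloaded stations from the subway example. It assumes the history is already available as a list of dictionaries with `station`, `action` and `timestamp` fields; these names are invented for the example.

```python
from collections import Counter
from datetime import datetime

OVERLOAD_THRESHOLD = 10_000  # entries per station per hour, as in the subway example


def hourly_entries(events, start: datetime, end: datetime) -> Counter:
    """Count "enter" events per (station, hour) with start <= timestamp < end."""
    counts = Counter()
    for event in events:
        if event["action"] == "enter" and start <= event["timestamp"] < end:
            hour = event["timestamp"].replace(minute=0, second=0, microsecond=0)
            counts[(event["station"], hour)] += 1
    return counts


def overloaded(counts: Counter, threshold: int = OVERLOAD_THRESHOLD):
    """Return the sorted (station, hour) pairs that reached the threshold."""
    return sorted(key for key, n in counts.items() if n >= threshold)
```

Changing `start` and `end` lets an analyst rerun the same detection over the last day, week, month or year without touching the logic.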

A mountain of data… two architectures

These sensors generate a great volume of data that can be used only once. The problem with IoT is not the same as with Big Data: what is required is not storage but speed, as these data lose their value within a few days. In other words, device data is sent out as an endless stream of raw events that require rapid interpretation by the processing layer. The events contain basic information such as device identification, action, date and time. To achieve certain business objectives, it is necessary to manage queries efficiently by interacting with data storage. This is why there are two important data processing architectures that serve as the backbone of IoT applications: Lambda and Kappa.

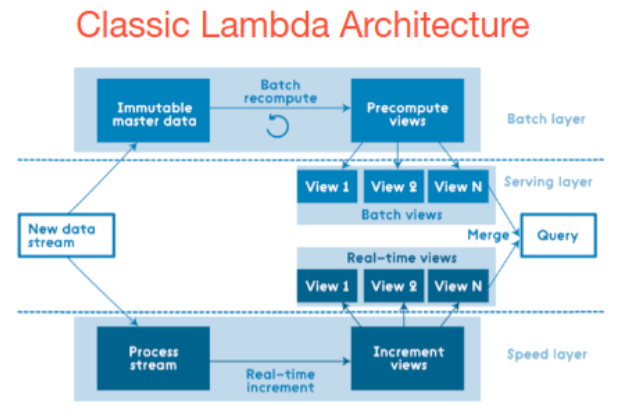

Lambda Architecture

Lambda architecture is a data processing technique that is capable of handling large amounts of data efficiently. The efficiency of this architecture is evident in the form of higher performance, lower latency and negligible errors.

How it works: events are processed in two separate pipelines. A speed layer uses an event-streaming engine such as Apache Flink or Apache Storm. Alongside it there is a batch layer, usually a Hadoop cluster with a copy of all events stored in HDFS or S3, where the events are processed in batches. The most important feature of this architecture is that the separate “speed layer” allows for real-time reaction to events. Some points to consider:

- Two similar implementations of complex event detection must be built and maintained using different tools.

- Not all use cases require a true real-time reaction. When a human being is expected to act on a detected event, that person may be busy and react only an hour later, so the benefit of real-time detection is limited.

When to use Lambda:

- When user queries need to be handled ad hoc using immutable data storage.

- When quick responses are required and the system must be able to handle multiple updates in the form of new data streams at once.

- When none of the stored records should be deleted and updates and new data need to be added to the database (the information does not lose value over time).
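The two-pipeline idea can be sketched with a toy in-memory model: every event is appended to an immutable master log for the batch layer and also updates an incremental speed view, and queries merge the two. This is a simplified illustration of the pattern, not how Hadoop, Flink or Storm are actually wired together; all names are invented for the example.

```python
from collections import Counter


class LambdaCounter:
    """Counts events per device using a batch view plus a speed view."""

    def __init__(self) -> None:
        self.master_log = []         # immutable, append-only record of all events
        self.batch_view = Counter()  # recomputed periodically from the master log
        self.speed_view = Counter()  # incremental counts since the last batch run

    def ingest(self, event: dict) -> None:
        self.master_log.append(event)             # batch path: store everything
        self.speed_view[event["device_id"]] += 1  # speed path: update immediately

    def run_batch(self) -> None:
        # Batch implementation of the logic: a full recomputation over the log.
        self.batch_view = Counter(e["device_id"] for e in self.master_log)
        # The batch view now covers every stored event, so reset the speed view.
        self.speed_view.clear()

    def query(self, device_id: str) -> int:
        # Serving step: merge the batch result with the recent real-time result.
        return self.batch_view[device_id] + self.speed_view[device_id]
```

Even in this toy model the counting logic exists twice, once incrementally in `ingest` and once as a full recomputation in `run_batch`, which is the duplication noted in the first point above.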

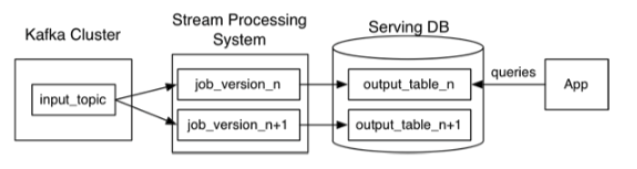

Kappa Architecture

In 2014, Jay Kreps started a discussion in which he pointed out some shortcomings of the Lambda architecture. This led some specialists to an alternative architecture that required less code and worked well in certain business scenarios where the multiple layers of the Lambda architecture did not seem to be the best solution.

Kappa Architecture cannot be considered a substitute for the Lambda architecture, but rather an alternative to be used in circumstances where an active batch layer is not necessary to meet the required quality of service. This architecture finds its applications in the real-time processing of different events. Here are some features:

- Implementation is much easier without duplicating the complex event detection logic.

- Suitable for applications that involve a human reaction and do not require a true real-time reaction, as batch detection of events every 10 minutes is sufficient.

- Batch processing of events on a big data platform (such as Querona) offers more options for relating or linking events to internal company data, which must be made available in some way (this is where Querona helps).

- Multiple data events or queries are logged in a queue to be handled against a distributed or historical file storage system.

- Sequence processing platforms can interact with the database at any time as the order of events and queries is not predetermined.

- The architecture is robust and highly available, requiring terabytes of storage on each node of the system to support replication.
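The core of the Kappa idea, a single processing path fed from a replayable log, can be sketched as follows. The `EventLog` class is a toy stand-in for a streaming platform such as Kafka, and the function and field names are invented for the example; reprocessing history simply means replaying the log through the same function, possibly with changed parameters.

```python
class EventLog:
    """Append-only log, a toy stand-in for a streaming platform."""

    def __init__(self) -> None:
        self._events = []

    def append(self, event: dict) -> None:
        self._events.append(event)

    def read_from(self, offset: int = 0):
        """Replay the stored events starting at the given offset."""
        return iter(self._events[offset:])


def count_hot_events(events, threshold: float = 50.0) -> dict:
    """The single implementation of the detection logic: hot readings per device."""
    counts = {}
    for event in events:
        if event["temperature"] > threshold:
            counts[event["device_id"]] = counts.get(event["device_id"], 0) + 1
    return counts


log = EventLog()
log.append({"device_id": "a", "temperature": 55.0})
log.append({"device_id": "a", "temperature": 45.0})
log.append({"device_id": "b", "temperature": 60.0})

current = count_hot_events(log.read_from(0))                    # normal processing
reprocessed = count_hot_events(log.read_from(0), threshold=40)  # replay with a new rule
```

Because there is only one code path, a change to the detection rule is applied by replaying the log rather than by updating a separate batch job.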